JazzXR

How can we visually memorize the sound of our music by painting our physical wall?

Role

Product Designer

Art Director

Front-end developer

UX and Interaction designer

Team

Jay-ai Locsin (Engineer)

Timothy Wang (Lead)

Austin Peng (Product Manager)

Time

3 weeks

Skills

Apple Vision Pro Development

Unity

Figma

Problem!

Synesthetic thinkers see music in their minds, but in reality, it remains invisible and fleeting. How can we bring this imagined experience to life?

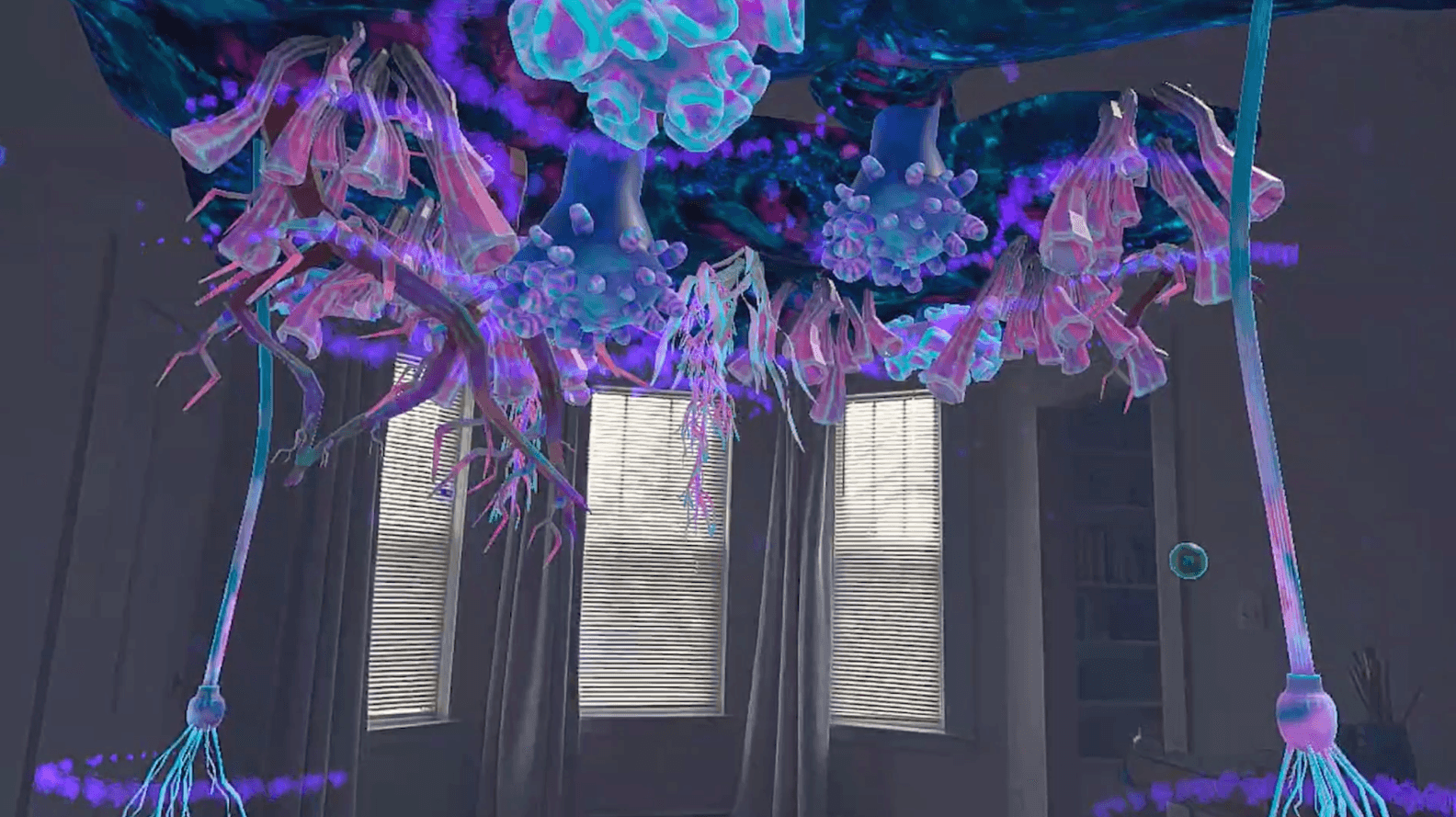

Answer!

JazzXR turns sound into living visuals using Apple Vision Pro. As a saxophonist plays, bubbles emerge, shaped by pitch, articulation, and intensity, painting the walls with color and motion. This transforms music into a tangible, immersive experience, making sound not just heard, but seen.

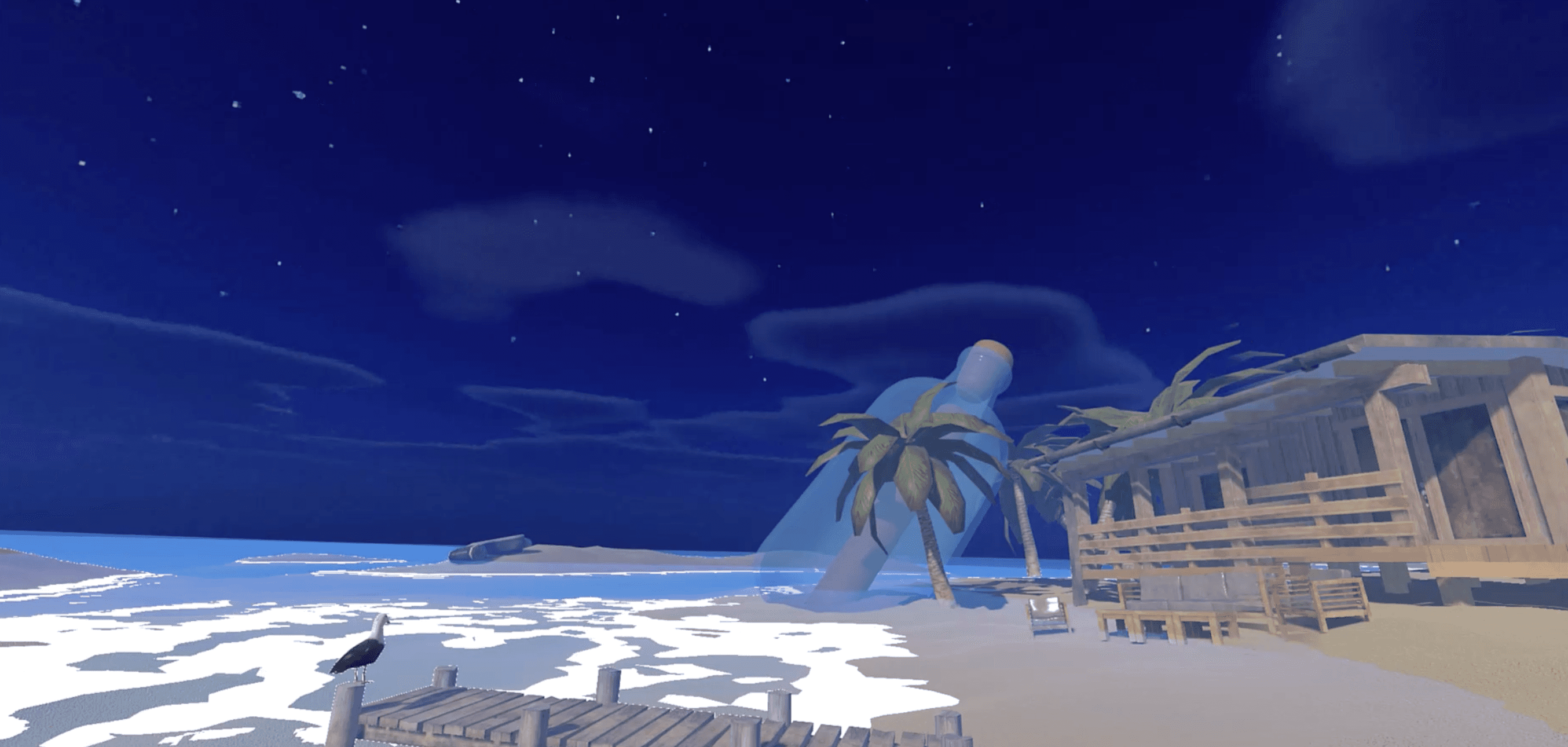

Concept Poster v.1

Concept Poster v.2

How To Play

1. Blow your instrument

2. Create bubbles with your music

3. Bubbles pop on the wall

4. Paint your room with music

About

CONCEPT

Inspired by coloured hearing synesthesia, where tones evoke colors, JazzXR makes invisible sound visible, turning music into a living, evolving mural. Every performance leaves behind a unique visual imprint, helping musicians experience their music in a completely new way.

Goal

STRATEGY

Make music more memorable → Link sound to a visual representation that lingers

Encourage expressive practice → Help musicians see how they shape their sound

Bridge the gap between sound and sight → Develop a new way of learning music that isn’t just auditory

Turn practice into an artistic process → Let musicians create something tangible from their sound

Design Process

GAME DESIGN / RESEARCH

1. User Research & Journey

User Target

User Journey

2. Ideation

StoryBoard

Design Principle

GameFlow

3. Prototype

Prototype Versions

Wall Detection Prototype

Noise Detection Prototype

Bubble Variation Test

Playtest

V.01

V.20

Element

Each bubble in JazzXR is unique, shaped by the way the saxophone is played. The system listens in real time and transforms the sound into colorful, expressive visuals, making each note feel personal and alive.

Here’s how the four key elements define the bubbles:

How It's Built

JazzXR was developed through a mix of research, sound analysis, and spatial computing, integrating multiple technologies to create an immersive music-visualization experience on Apple Vision Pro.

1. Research: Understanding Synesthesia & Musician Needs

We began by exploring coloured hearing synesthesia, where people associate specific tones and chords with colors. This helped us design a meaningful way to visualize sound based on real-world experiences of synesthetic musicians. We also conducted research on how instrumentalists, especially saxophonists, interpret sound visually in their minds, ensuring that JazzXR reflects their natural perception of music.

2. Real-Time Sound Detection

We use Essentia.js to process live audio input from the saxophone. This allows us to detect:

Pitch & frequency → Assigns colors based on the note played

Note duration → Determines the bubble’s size and lifespan

Articulation (staccato/legato) → Affects the movement and behavior of bubbles

Volume & intensity → Controls bubble expansion and splash effect
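To make the mapping above concrete, here is a minimal sketch of how detected note features could be turned into bubble parameters. It is an illustration under stated assumptions, not the project's actual code: the `NoteFeatures` and `BubbleParams` shapes, the one-hue-per-pitch-class color wheel, the 0.25 s staccato threshold, and all scaling constants are hypothetical, and the features are assumed to arrive already extracted by the audio-analysis step.

```typescript
// Hypothetical input: features already extracted from the live audio.
interface NoteFeatures {
  frequencyHz: number; // detected fundamental frequency
  durationSec: number; // how long the note was held
  rms: number;         // loudness, assumed normalized to 0..1
}

// Hypothetical output: what the renderer would need to spawn a bubble.
interface BubbleParams {
  hueDeg: number;            // color hue on a 0..360 wheel
  radiusM: number;           // bubble radius in meters
  lifespanSec: number;       // how long the bubble lives
  motion: "burst" | "drift"; // short notes burst, held notes drift
  splashScale: number;       // size of the splash when it pops
}

// Assumed cutoff: notes shorter than this are treated as staccato.
const STACCATO_MAX_SEC = 0.25;

function clamp(x: number, lo: number, hi: number): number {
  return Math.min(hi, Math.max(lo, x));
}

// Pitch class 0..11 (0 = C) in 12-tone equal temperament with A4 = 440 Hz.
function pitchClass(frequencyHz: number): number {
  const semitonesFromA4 = 12 * (Math.log(frequencyHz / 440) / Math.LN2);
  const midi = Math.round(69 + semitonesFromA4);
  return ((midi % 12) + 12) % 12;
}

function bubbleFor(n: NoteFeatures): BubbleParams {
  const loud = clamp(n.rms, 0, 1);
  return {
    // Pitch -> color: each of the 12 pitch classes gets a 30-degree hue step.
    hueDeg: pitchClass(n.frequencyHz) * 30,
    // Duration -> size and lifespan: longer notes make bigger, longer-lived bubbles.
    radiusM: clamp(0.05 + 0.1 * n.durationSec, 0.05, 0.5),
    lifespanSec: 2 + 2 * n.durationSec,
    // Articulation -> movement, approximated here by note length alone.
    motion: n.durationSec < STACCATO_MAX_SEC ? "burst" : "drift",
    // Volume -> splash: louder notes pop with a larger splash.
    splashScale: 0.5 + loud,
  };
}
```

For example, a short, medium-loud A4 (`bubbleFor({ frequencyHz: 440, durationSec: 0.1, rms: 0.5 })`) yields a small "burst" bubble, while a held middle C yields a larger "drift" bubble in a different hue. Treating articulation as a pure duration threshold is a simplification; a fuller version would also look at the note's attack.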

3. Apple Vision Pro Development & Spatial Mapping

The app is built using Unity Pro Enterprise with PolySpatial, allowing seamless integration with Apple Vision Pro’s passthrough environment. We used:

Xcode → To build and optimize the app for visionOS

TestFlight → For testing on real devices, ensuring smooth interaction and performance

4. Connecting Visuals to Sound in Mixed Reality

By combining audio analysis with real-time 3D rendering, we map bubbles directly into the user’s space, making music feel physically present.
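One way to picture how a bubble ends up on a wall is a ray-plane intersection: the bubble travels in a straight line until it meets a detected wall surface, and that meeting point is where it pops and leaves paint. The sketch below is written in TypeScript for illustration only; the real app runs in Unity, and the `Wall` shape, the `popPoint` function, and the straight-line motion are all assumptions rather than the project's implementation.

```typescript
type Vec3 = [number, number, number];

function dot(a: Vec3, b: Vec3): number {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Hypothetical wall description: any point on the wall plus its surface normal.
interface Wall {
  point: Vec3;
  normal: Vec3;
}

interface PopResult {
  position: Vec3;  // where the bubble touches the wall
  timeSec: number; // how long it takes to get there
}

// Returns where a bubble moving in a straight line reaches the wall plane,
// or null if it moves parallel to the wall or away from it.
function popPoint(origin: Vec3, velocity: Vec3, wall: Wall): PopResult | null {
  const denom = dot(velocity, wall.normal);
  if (Math.abs(denom) < 1e-9) {
    return null; // moving parallel to the wall, never hits it
  }
  const toWall: Vec3 = [
    wall.point[0] - origin[0],
    wall.point[1] - origin[1],
    wall.point[2] - origin[2],
  ];
  const t = dot(toWall, wall.normal) / denom;
  if (t < 0) {
    return null; // the wall is behind the bubble
  }
  return {
    position: [
      origin[0] + velocity[0] * t,
      origin[1] + velocity[1] * t,
      origin[2] + velocity[2] * t,
    ],
    timeSec: t,
  };
}
```

A bubble released 1 m above the floor and moving at 1 m/s toward a wall 2 m ahead would, under these assumptions, pop after 2 seconds at the same height on the wall.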